Introducing the Task Artistry Index

Eliciting A.I. Individuality

Aristotle makes a difference between two kinds of thinking: thinking relating to things that cannot be otherwise—knowledge—and thinking about things that are capable of being otherwise—art, and practical judgment1. In common parlance, we say that the former kind of thinking has objective results whereas the latter kind is more personal and subjective. What's the quickest route for driving from NYC to Philly? Objective. What's the most scenic route from NYC to Philly? Subjective. From the kind of answers possible for the first question, we can't really tell much about the answerer beyond how good they are at mapping. But from answers to questions of the second sort, we can discern a bit of the answerer's personality.

Similarly with A.I. systems, some tasks given them disclose their individuality more than others. I propose a way to measure this variation, introducing a metric called the Task Artistry Index.

To first develop some intuition, consider the following two A.I. tasks:

a) Speech-to-Text, which takes an input audio clip containing spoken words and returns the associated text transcript as output. cf. OpenAI's Whisper.

b) Text-to-Speech, which takes a block of text as input and generates audio as output, with the text read out aloud in a synthesized voice. cf. Coqui.AI

Now the correct outputs for the first task are fairly deterministic. Asides from minor choices of punctuation (e.g. commas) and alternate spellings (OK vs. okay), there's not much scope for different correct outputs given the same input. But for the second task, Text-to-Speech, choices abound: what voice to use, how quickly to read, where to pause, which words to emphasize, what affectation to put on, and so on. One can make an art of it. And we do: witness the Emmy Award for outstanding voice-over performance.

The Task Artistry Index formalizes this into a quantitative metric:-

TAI = average over potential inputs[ log(multiplicity of equally correct outputs) ]

Let's discuss the three steps in the formula above one at a time. Skim if too technical!

1. Equally correct outputs

It's no good to count various poor solutions to a given question when trying to measure the subjectivity of the task. So we need to set some standards, and count only the outputs that meet them. For the navigation task, to return the quickest driving route between two places, we can count all potential answers within, say, 5 percent of actual time taken.

2. Log-multiplicity of above

If there are two good answers to a given question, but there is significant similarity between them, they oughtn't count as two but rather something between one and two. Taking the navigation task again, if two equally good routes A and B overlap half the way, we can say the true effective count of good routes is 1.5. There are statistical methods to compute this formally, calling the effective count multiplicity. We then take the logarithm of this value (log(1.5) in this case) to express the multiplicity as information content (cf. coding length in bits from information theory2).

3. Averaging over above

We can compute the above log-multiplicity for every potential input to the task (for the navigation task, for every pair of places A and B). To arrive at the TAI, we then average over these log-multiplicity values. We perform a weighted average where the weights are the distribution density of the inputs (how likely each pair is typically asked for—so less likely pairs count for less in the average).

Tasks besides Speech to-Text that would score very low in TAI, possibly as low as 03, include face recognition, speaker identification (audio), email spam detection, transaction fraud detection, and OCR/handwriting recognition. Low but not quite 0 TAI tasks will be tasks like navigation, image classification, some game playing, recommendation engines, and simple natural language tasks such as topic detection.

Medium artistry tasks could include image captioning, other game playing, and more difficult natural language tasks such as text summarization and Q&A. Autonomous driving likely belongs in this list as well.

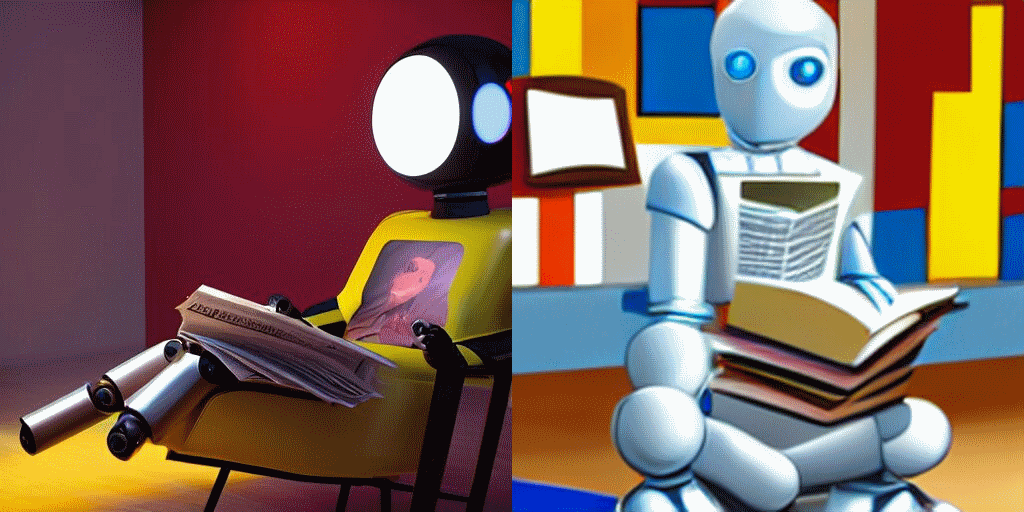

Lastly, high artistry tasks are those where the outputs given an input can be rather unpredictably diverse while still being correct: image synthesis ("draw me a robot in a coffeeshop reading a book"), conversational AI ("I am looking for a fun summer vacation. Can you help me decide an itinerary?"), and text synthesis ("tell me a joke about A.I.").

Fig. 2: High task artistry—Two AI systems drawing “a robot in a coffeeshop reading a book” very differently

Tasks scoring high on TAI afford creativity and individuality from their solvers. Given some time with a few different A.I. systems that handle the same high artistry task, e.g. DALL-E and Stable Diffusion, one can identify which system produced which answers based on their distinctive styles. Just like how one can tell apart the music of Beethoven, Haydn and Brahms when given several bars of music from each.

We appear to consider creativity and individuality as universal attributes of all humans. I expect we would insist A.G.I. to have it too, and consequently to handle high TAI tasks well. But while being able in this way might be necessary, it might not be sufficient for an A.I. system to be considered A.G.I.—a rational being on par with humans.

For in handling these tasks there is room for bias and bad ethical choices that the system can be oblivious to. A Text-to-Speech engine can choose an angry, menacing tone that might technically count as correct (and creative even) but frighten and put off the listener. It thereby displays poor judgment, without even knowing that there is such a thing as good judgment. That is why in these cases we tend to blame the human engineers responsible for the A.I. system rather than the A.I. itself4.

In other words, art is not enough. According to Aristotle, while good art evinces a "true rational understanding that governs making"5, there is no virtue associated with it. Because making art is not an end in itself, and one can excel at making bad art6. And in his framework, the work of a human being is informed by reasoning that involves virtue. He calls the prime faculty here practical judgment.

That would be a topic for another day. I'll conclude summarily that I've proposed in this post a metric called the Task Artistry Index. It helps measure how much scope a task has to elicit individuality out of an A.I. system.

Thanks to Bruce Parker for reading a draft of this.

Nicomachean Ethics, Bk 6 Ch 3. trans Joe Sachs.

Pattern Recognition. Christopher Bishop, 2006. Section 1.6.

When all inputs have only one possible correct output, TAI = log(1) = 0.

Why DeepMind isn’t deploying its new AI chatbot — and what it means for responsible AI. VentureBeat, Sep 23 2022.

Nicomachean Ethics, Bk 6 Ch 4. trans Joe Sachs.

Nicomachean Ethics, Bk 6 Ch 5. trans Joe Sachs.