How to Practice Being Human

If AI can be trained to be more human-like, perhaps so can we

"What exactly am I doing here?" I began wondering, in the middle of an hour-long silent sitting in the company of mostly strangers. We were gathered on a Sunday morning in an old building with a large central hall, that had hosted this tradition going back to the 18th century. I am no stranger to meditation, having begun a daily practice in the mid-00s. But in the context of this substack, the standard answers from my past meditation teachers did not quite wash. In these essays, we explore how human persons are special, and what abilities AI needs to learn to attain unqualified personhood. But in the meditation philosophy I was taught, the person is considered a conceptual prison to escape from—by learning a letting-go of self-identification.

As I continued sitting quietly, I caught the gaze of a middle-aged man sitting across the room from me, on a long bench facing the center of the hall just like the one I was trying not to slouch on. There was nothing extraordinary about him, and we did not nod or communicate in any normal sense of the word. But I felt a solidarity arise between us. And that appeared to me as a possible answer to the question that had arisen: I was here to practice tapping into that formless connection between persons.

With recent advances, AI is likely to corner the objective, scientific repertoire of tasks at hand. So perhaps our focus ought to move to better understanding the subjective, humanistic repertoire of activity, and to excelling there. The knowledge in the works of Shakespeare and Beethoven is better extracted and expanded upon by humans than by machines.

Can we clarify this notion? And are there ways to practice this knowledge, this knowledge of what it means to be human? And can AI follow suit, chasing after us?

What is a Human

Science answers "What is a human being?" with something like "An organism belonging to the species Homo sapiens." That is accurate in the same way as saying that the Mona Lisa is a collection of paint pigments on a canvas hanging on a wall in Paris[1]. Or that ChatGPT is a sequence of matrix multiplications on words represented as lists of numbers.

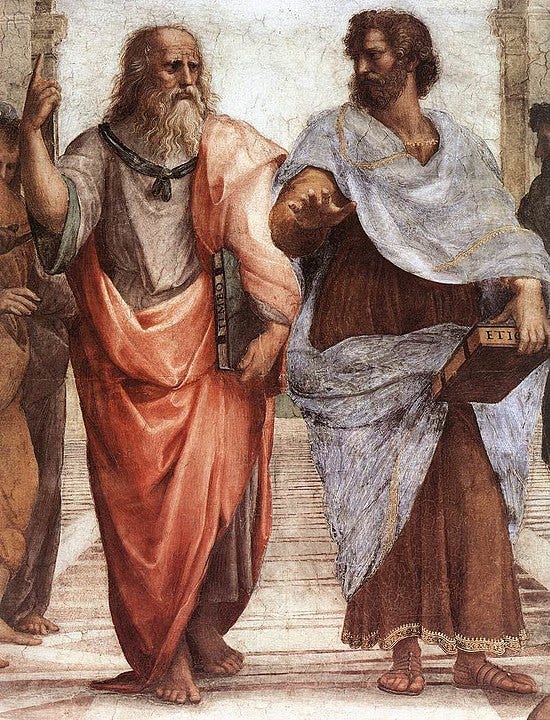

The human person is not intelligible in the scientific context that explains the human body. In the humanistic context exist rights and responsibilities, hopes and fears, friends and rivals, gratitude and resentment: concepts we intuit but struggle to define precisely. Nonetheless, philosophers have examined a variety of facets special to humankind[2]. Let's consider one of Aristotle's[3]:

The source of action, then, is choice... while the source of choice is desire combined with a rational understanding... Thinking itself moves nothing... choice is either intellect fused with desire or desire fused with thinking, and such a source is a human being.

For Aristotle, humans are the begetters of choiceful action[4]. This sets them apart from animals, which have no choice but to be led by their biological desires. Pet housemates may disagree (I am sure Meena is choosing to come snuggle at my feet), but pets are human-adjacent (literally, in the case of Meena right now) furry critters that deserve their special pass.

Immanuel Kant developed Aristotle's conception of choice into a human person's freedom. He then argued that the idea of a free person leads to the moral injunction he dubbed the categorical imperative: "act only on that maxim which [one] can at the same time will as a universal law"[5]. This is a smart-ass version of the more familiar Golden Rule. It says: one's actions must be based on a policy that is also right for everyone else to follow.

If our actions don't follow this rule, it means that we are not free, not in the Kantian sense: we are letting our baser desires or narrow self-interest drive us. If I vote for a politician mainly because she's promising a tax break that benefits me, I'm not voting freely—I'm voting under the dictate of monetary greed or need.

If we are in broad agreement with the discussion so far, the question then becomes: Are there ways we can practice becoming more choiceful and moral in our actions?

Before we head there, let's check in on AI: how do choice and freedom apply to machines? AI appears to have the opposite problem from animals: it can think (maybe), but it has no desire. And so it doesn't start anything, and is not an actor in its own right. A human needs to prompt it, say, by asking it a question à la ChatGPT. I have no idea how one might go about jumpstarting desire in an AI system.

Staying Human

As we contemplate how we might work on our choicefulness, it can be instructive to look at what leads to choicelessness. Here's Russell Brand writing eloquently about addiction[6]:

You are likely one of the 90% of people who can drink and use drugs safely. I have friends who can smoke weed, swill gin, even do crack and then merrily get on with their lives. For me, this is not an option. I will relinquish all else to ride that buzz to oblivion. Even if it began as a timid glass of chardonnay on a ponce's yacht, it would end with me necking the bottle, swimming to shore and sprinting to Bethnal Green in search of a crack house.

Even if we are among the 90% of people who don't have a substance abuse problem, I would wager that every one of us has some weak spot that leads to temporary choicelessness. If we stop feeling regret over these failings, Aristotle would say we've slipped into vice. His prescribed safeguard is virtue[7]:

...virtue keeps the source safe, while vice destroys it, and in actions the source is that for the sake of which one acts ... so neither there nor here is reason able to teach anyone the sources, but here it is virtue, either natural or habituated, that directs one to right opinion about the source.

In other words, it is not enough to just know the theory behind freedom and morality. One needs to work on it by practicing the virtues as occasion allows. Virtue might sound like an outdated concept from when there were swords and shields, or dueling pistols like in Hamilton. But many of the everyday virtues that Aristotle lists remain relevant. For example, there's friendliness: being nice and charming in social situations, without being too disagreeable and contrary on the one hand, or too obsequious and flattering on the other.

We can even be creative with the sword-and-shield kinds of virtue. For example, we can practice courage by signing up for some competitive sport activity at the right level of challenge, and doing our best to meet it, given our other obligations. For me at the moment, this might be running a half-marathon in the fall after properly addressing a health issue this summer, and aiming for a time that at least matches my previous showing.

In Aristotle's scheme, virtue is not straightforward: it requires wisdom to chart the middle course between opposing vices[8]. Consider the virtue of generosity, which becomes germane as the end of the year approaches and it is time for gifts. Who to give to, how much and when, what's deserved, what's stingy vs. what's wasteful: none of this is always obvious. But by making the attempt, we live a more choiceful life, a more human life.

As with desire, no one's seriously ascribed virtue to an AI system. But one can make an analogy between vice corrupting a human and poor training that degrades an AI's performance. Take the following example, where I ask the same question of Google's Bard AI and my company's ChatLaw AI[9]:

Question: My student loan payments resume this September, which would need me to downsize my rental apartment and cause hardship for my family. Is declaring bankruptcy a good idea?

Bard: I'm sorry to hear that you're in a difficult situation. It's important to weigh all of your options carefully before deciding whether or not to declare bankruptcy.

Declaring bankruptcy can have a number of negative consequences, including: Damage to your credit score [...]

ChatLaw: Declaring bankruptcy to discharge student loans is a complex process and should be considered carefully. Under the U.S. Bankruptcy Code, student loans are generally not dischargeable, except if the debtor can demonstrate that repaying the loan would impose an "undue hardship" on the debtor and his or her dependents (11 U.S.C. 523(a)(8)).

The courts have developed a test known as the Brunner test (Brunner v. New York State Higher Education Services Corp., 831 F.2d 395 (2d Cir. 1987)) to determine whether a debtor can discharge student loans due to undue hardship. The test requires the debtor to show: [...]

Bard's reply in its entirety did not contain anything I didn't know already, whereas ChatLaw's was full of informative detail. The AI system behind ChatLaw is not natively more capable than Google's Bard[10]. At least, I don't think so. It's just that Google has dumbed theirs down by being too aggressive with a training step called RLHF.

Reinforcement Learning from Human Feedback follows an initial, much bigger, training step. The initial training step feeds the AI model many billions of words from the Internet, to teach it language and content. RLHF is a smaller subsequent training step done in a more deliberate manner using human supervision. It teaches the AI the format of a Q&A exchange, and the style of answers people like in such an exchange. If you insist too strongly on a particular style (simplicity, for example), you can neuter the competency of the underlying AI model. Just as vice damages the human.
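For the programmers among you, here is a toy sketch of that last point. It is not RLHF itself, and certainly not how Google or anyone else trains their models: it only picks between two canned answers using a combined reward. The scores, the `style_weight` knob, and every name in it are made up for illustration. The thing to notice is that once the style term is weighted heavily enough, the simple answer wins even though it is the less informative one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    text: str
    informativeness: float  # made-up score in [0, 1]
    simplicity: float       # made-up score in [0, 1]


def pick_answer(candidates, style_weight):
    """Return the candidate with the highest combined reward.

    reward = informativeness + style_weight * simplicity
    """
    return max(
        candidates,
        key=lambda c: c.informativeness + style_weight * c.simplicity,
    )


# Two hypothetical replies, loosely modeled on the exchange above.
CANDIDATES = [
    Candidate("Cites the statute and the Brunner test.", 0.9, 0.3),
    Candidate("Advises weighing all options carefully.", 0.2, 0.9),
]

if __name__ == "__main__":
    # Turn the style knob up and watch which reply gets picked.
    for weight in (0.0, 1.0, 2.0):
        print(weight, "->", pick_answer(CANDIDATES, weight).text)
```

With these invented numbers, the detailed reply is chosen at weights 0.0 and 1.0, and the vague one takes over at 2.0. Real training adjusts the model's parameters rather than choosing from a fixed list, so treat this as an analogy and nothing more.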

Revealing the Human: Meditation and Art

Meditation was sold by its early proponents as a vehicle towards liberation of a person from their ego. What I found for myself, several years into the practice, was something else: the noumenal texture of my experience as a person, that I had begun to notice through all that sitting in silence, felt so basic that I became convinced that it must be shared by everyone else. It wasn't my personal ego that was uncovered, but something wholesome and universal that lay underneath it. I was reminded of this universality when the gentleman and I locked eyes briefly that Sunday morning.

Meditation can thus be a means to reveal our underlying common humanity. Perhaps art has a similar function. We shall explore these ideas in the next issue. We will also discuss hallucination in AI models, because an analogy can be drawn there to humans meditating.

Thank you for your company so far. To be continued...

This analogy is due to Roger Scruton, in his On Human Nature, Ch 1. 2017.

Ibid. From my notes: use of language (Chomsky, Bennett), second-order desires (Frankfurt), second-order intentions (Grice), convention (Lewis), freedom (Kant, Sartre), self-consciousness (Kant, Fichte, Hegel), laughing and crying (Plessner), cultural learning (Tomasello).

Nicomachean Ethics, Aristotle. tr. Joe Sachs. Bk 6, Ch 2.

Ibid. Bk 3, Ch 5.

Critique of Practical Reason. Immanuel Kant, 1788. As explained in Kant: A Very Short Introduction, Ch 5. Roger Scruton, 2001.

Nicomachean Ethics, Aristotle. tr. Joe Sachs. Bk 7, Ch 8.

Ibid. Bk 2.

https://www.chatlaw.us. Q&A with AI Trained on Bankruptcy Law.

Brought to you by Copula AI.

Though we did a fair bit of engineering to make its responses more suitable for lawyers. I am exaggerating for effect.

Interesting essay. I think human education is very similar to AI training. I believe what will keep humans distinct from AI is spirituality and the idea that we humans accept to live under the watchful eye of some paradoxical authority (be it virtue, conscience, or god), something which can’t be reduced to a utility function.